Emotional attachment to artificial intelligence: a new trend?

by Aurelie Jean and Mark Esposito | LSE Business Review (London School of Economics)

Increasingly personalized interactions with generative artificial intelligence tools are transforming the economic model of digital platforms. Instead of just capturing attention, chatbots are beginning to exploit emotional ties with users, creating unprecedented challenges for society and regulation.

The advancement of generative artificial intelligence in all sectors is leading to new products and services, new ways of working and new uses in all aspects of our personal and professional lives.

These AI solutions will undoubtedly give rise to new revenue generation models.

But beyond the current model, which bets on the user’s attention, something specific to generative AI is emerging: the attachment economy.

Artificial emotional attachments carry risks and threats for individuals and for society in general.

The attention model of artificial intelligence

The attention economy revenue model depends on the ability to engage users and capture their attention as quickly and for as long as possible.

This is the case for the vast majority of social networks, such as X, Instagram, Facebook, TikTok and Snapchat, as well as dating apps and professional networking sites like LinkedIn.

The recommendation algorithms used by these platforms suggest content that users agree with, ideas they believe in, and transgressive or controversial topics that are significantly more likely to go viral.

Targeted recommendation depends, among other things, on filter bubbles.

Social networks segment users and filter the content that each person sees according to their previous behavior on the platform.

This is why a flat-earther is more likely to see content that defends the flat-earth conspiracy theory.

The threats of the attention model

The scientific community has reached a widely documented consensus on the threats and consequences of the attention model.

These include abuse of influence, opinion manipulation, nervous and emotional fatigue and even dependence and depression.

Many scientists propose concrete and practical solutions.

These include revising the revenue model, significantly changing the recommendation algorithms and improving AI governance, with an emphasis on good design and testing practices.

New human beings

In November 2022, the situation began to change with the release of ChatGPT, based on GPT-3.5, and its rapid mass adoption.

Since then, numerous other generative AI tools, such as Gemini, Le Chat and DeepSeek, have become available.

These new options have changed the way we consume and process information, starting with how we do online research.

Previously, we typed a few keywords into a search engine bar and received results filtered by a ranking algorithm, such as Google's PageRank.

Today we enter queries as one or more sentences in a message box and get an answer produced by a generative algorithm.

These new tools strongly influence our interactions with the machines that are meant to help us.

The Eliza effect and the personification of AI

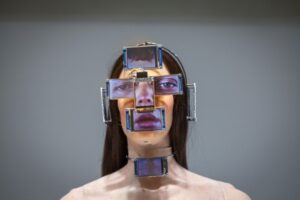

In practice, the way the technology is designed and its use of anthropomorphic language create a tendency to personify the AI tool.

The choice of small squares or dots, which appear at regular intervals while the algorithm is producing an answer, gives the impression that a human behind the screen is typing the answer on a keyboard.

These design choices encourage, not without danger, the Eliza effect: a spontaneous emotional response to a machine that resembles us. The term goes back to Eliza, the first chatbot, created in 1966.

The consequences are numerous. More and more people are using these conversational AI applications, not without risk, as confidants or even therapists.

Medically supervised research is also being conducted on their use in patient treatment.

An emotional attachment gradually builds between the generative AI and the user, which changes the economic model of these tools, with potentially harmful consequences for the user.

Emotional attachment to artificial intelligence produces more engagement

Through increasingly frequent and longer use, people tend to form an emotional attachment to conversational AI agents because of the sophistication of the interactions and the degree of exclusivity and intimacy they offer, even if these are artificial.

The Eliza effect then becomes a means of attracting and, above all, retaining users for as long as possible through the quality (again, artificial) of the exchanges.

People have the impression that they are interacting with another human being who seems to understand them.

Artificial attachment produces more engagement by triggering an emotional void that can lead to addiction and increase the number of human-machine exchanges.

Data on these exchanges can be used to enrich training and validation datasets, or sold as behavioral data for personalized and targeted marketing, for example.

From the attention model to attachment to artificial intelligence

AI tool owners can decide to design generative conversational AI applications to strengthen this emotional attachment with the user over time.

Increasing personalization through AI would facilitate this attachment. Attention, historically used as a driver of user attraction and retention, would gradually be replaced by emotional attachment.

Attachment may yield much larger financial returns than the attention-based model, with similar or even greater threats.

The threats of the emotional attachment model

Damage to people’s mental health, along with manipulation of and influence over their thoughts for marketing or political purposes, are among the risks of emotional attachment.

Unlike social networks, which involve interactions with other humans, generative conversational AI is limited to interactions between humans and machines.

Human-machine attachment risks further isolating people from other humans, which can create a void that is difficult to manage.

An artificial “relationship” with a machine involves no frustration and no significant contradiction.

Thus, over time, people can become desocialized.

Regulating the design of generative AI

After the discussions on how to legislate the design and use of AI, we must now proactively consider the changes in the economic model of these platforms in order to regulate their development.

An important step is to regulate design with regard to the effects of emotional dependence induced by, among other things, the Eliza effect.

We are gradually moving from an attention economy to an attachment economy, whose mechanisms must be studied and about which we must make ethical and legal decisions.

This article was originally published in the London School of Economics’ LSE Business Review and is republished here under a Creative Commons license.

About the authors